- cross-posted to:

- technology@lemmy.world

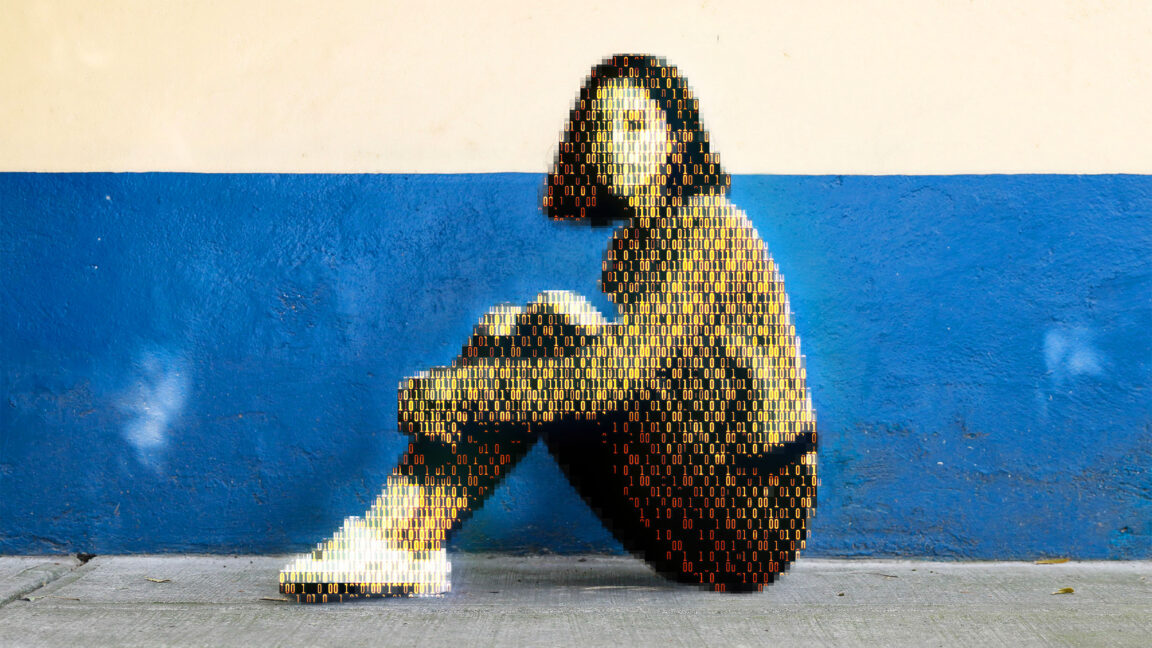

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the earliest AI technology striving to expose unreported CSAM at scale.

Reminds me of the A cup breasts porn ban in Australia a few years ago, because only pedos would watch that

There was a porn studio that was prosecuted for creating CSAM. Brazil, I believe. Prosecutors claimed that the petite, A-cup woman was clearly underage. Their star witness was a doctor who testified that such underdeveloped breasts and hips clearly meant she was still going through puberty and couldn’t possibly be 18 or older. The porn star showed up to testify that she was in fact over 18 when they shot the film and brought all her identification, including her birth certificate and passport. She also said something to the effect of women come in all shapes and sizes and a doctor should know better.

I can’t find an article. All I’m getting is GOP Trump pedo nominees and Brazil laws on porn.

Aw man, I love all titties. Variety is the spice of life.

Not to mention the self image impact such things would have on women with smaller breasts, who (as I understand it) generally already struggle with poor self image due to breast size.

Clearly the state gives zero fucks about these women, or anyone else, or even “the children”.

Catholic Church is still around for a reason